TL;DR:

ServiceNow platforms collapse under their own weight when configuration management lacks discipline. Inconsistent naming conventions create 40% longer troubleshooting cycles. Undocumented changes trigger cascading failures. Configuration drift silently erodes stability until a critical update fails spectacularly. The solution? Treat configuration management like critical infrastructure: standardised naming frameworks, comprehensive documentation, environment segregation, and automated baseline monitoring that catches drift before it becomes disaster.

Executive Summary

The Problem

Your ServiceNow platform is expanding rapidly. New applications, integrations, custom workflows: each addition makes the environment more powerful and more fragile. Platform Administrators create business rules with inconsistent naming. Developers document changes in scattered emails. Change Managers approve modifications without understanding downstream impacts. Configuration drift accumulates silently: a modified workflow here, an undocumented integration there, a 'temporary' workaround that becomes permanent.

Then deployment day arrives. A routine update to the Service Portal breaks three unrelated workflows. Nobody knows why. The documentation doesn't match production. The Platform Owner discovers that 'temporary' changes from six months ago are now business-critical. Rollback isn't possible because nobody documented the baseline state. Your platform has become a house of cards: technically functional but strategically fragile.

Configuration chaos doesn't announce itself with alarms. It accumulates gradually until a critical moment exposes the fragility you've been ignoring.

The Solution

Scalable configuration management transforms your ServiceNow platform from fragile to resilient through four foundational practices. First, implement naming convention frameworks that make every configuration element instantly identifiable, so nobody has to hunt through business rules wondering which one controls approval routing. Second, establish comprehensive documentation standards using Knowledge Management to capture not just what changed, but why it changed and what it affects. Third, enforce environment segregation with rigorous promotion paths from Development through Test to Production, using Flow Designer and Integration Hub to automate deployments and eliminate manual errors. Fourth, implement automated baseline monitoring through Performance Analytics dashboards that detect configuration drift before it triggers incidents.

This isn't bureaucracy; it's the infrastructure that allows your platform to scale without collapsing. High-performing ServiceNow teams don't spend weekends troubleshooting mysterious failures. They've built configuration management disciplines that prevent problems rather than react to them.

Key Business Outcomes

Reduce troubleshooting time by 40% through consistent naming conventions that make configuration elements instantly identifiable

Eliminate 60-70% of deployment failures caused by undocumented dependencies and configuration drift

Accelerate change approval cycles by 30% when Change Managers can quickly assess impact through comprehensive documentation

Achieve 95%+ configuration accuracy between environments through automated promotion and baseline validation

Reduce platform-related incidents by 50% within six months through proactive drift detection and remediation

The Infrastructure That Determines Whether Your Platform Scales or Collapses

Your ServiceNow platform's technical capabilities don't determine its success. Configuration management discipline does.

You've invested hundreds of thousands in licensing. Your Platform Architect designed elegant solutions. Your Technical Lead built sophisticated integrations. But if three different developers use three different naming conventions for business rules, if critical workflows lack documentation, if nobody tracks what changed between Test and Production, your platform is one deployment away from chaos.

Configuration management isn't the glamorous part of ServiceNow administration. It's the unglamorous discipline that separates platforms that scale from platforms that collapse. Let's examine how to build that discipline into your foundation.

These four foundational practices transform your ServiceNow platform from fragile to resilient, preventing configuration chaos.

Establishing Naming Convention Frameworks That Scale

Inconsistent naming conventions don't just annoy Platform Administrators; they create measurable operational drag. When a Service Desk Analyst reports that approval workflows are failing, how long does it take your team to identify which of the forty-seven business rules named 'Approval_Check', 'Check_Approval', or 'ApprovalValidation' is causing the problem?

📊 DATA INSIGHT Inconsistent naming conventions create 40% longer troubleshooting cycles. When Platform Administrators must hunt through dozens of business rules with names like 'Approval_Check', 'Check_Approval', or 'ApprovalValidation', what should take 10 minutes takes 45. Multiply this across hundreds of troubleshooting sessions annually, and you're losing weeks of productivity to preventable confusion.

Effective naming conventions follow three principles: consistency, descriptiveness, and hierarchy.

Consistency means every configuration element follows the same pattern. Business rules use the format: [Module]_[Function]_[Sequence]. For instance: RITM_ApprovalRouting_010, RITM_ApprovalRouting_020, RITM_ApprovalRouting_030. The module prefix (RITM) immediately identifies that the rule affects Request Management. The function descriptor (ApprovalRouting) explains what it does. The sequence number (010, 020, 030) indicates execution order, and the gaps of ten allow insertions without renumbering.
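A pattern like this is easy to check mechanically. The following is a minimal sketch, not a ServiceNow feature: the regular expression, the `RuleName` type and `parse_rule_name` are illustrative names, and the allowed character ranges (two to six capitals for the module, three digits for the sequence) are assumptions you would adjust to your own standard.

```python
import re
from typing import NamedTuple, Optional

# Assumed shape of [Module]_[Function]_[Sequence], e.g. RITM_ApprovalRouting_010.
# The exact character classes are illustrative; adapt them to your convention.
NAME_PATTERN = re.compile(
    r"^(?P<module>[A-Z]{2,6})_(?P<function>[A-Z][A-Za-z0-9]+)_(?P<sequence>\d{3})$"
)


class RuleName(NamedTuple):
    module: str
    function: str
    sequence: int


def parse_rule_name(name: str) -> Optional[RuleName]:
    """Return the parsed parts of a compliant name, or None if it does not match."""
    match = NAME_PATTERN.match(name)
    if match is None:
        return None
    return RuleName(match["module"], match["function"], int(match["sequence"]))
```

Calling `parse_rule_name("RITM_ApprovalRouting_010")` yields the module, function and sequence as separate fields, whilst names such as 'Approval_Check' return `None` and can be flagged for renaming.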

Descriptiveness means names communicate purpose without requiring documentation lookup. RITM_ApprovalRouting_010 tells you more than BR_12345 ever could. When your Change Manager reviews a deployment package at 4pm on Friday, descriptive names mean they can assess impact without hunting through technical specifications.

Hierarchy means your naming structure reflects your platform architecture. Group related elements through prefixes:

HR_ for Human Resources applications

FIN_ for Finance workflows

IT_ for ITSM processes

FAC_ for Facilities management

This hierarchical approach extends beyond business rules. Apply it to:

Service Catalog items: CAT_IT_HardwareRequest_Laptop

Flow Designer flows: FLOW_RITM_ApprovalEscalation

Integration Hub spokes: INT_Workday_EmployeeSync

Knowledge Management articles: KB_IT_PasswordReset_Instructions
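One practical payoff of the prefixes above is that an exported list of element names can be sorted by type without opening a single record. A rough sketch, assuming you already have the names as plain strings; `ELEMENT_TYPES` and `group_by_type` are hypothetical helpers, and the mapping contains only the four prefixes used in the examples.

```python
from collections import defaultdict
from typing import Dict, Iterable, List

# Prefixes taken from the examples above; extend the mapping for your platform.
ELEMENT_TYPES = {
    "CAT": "Service Catalog item",
    "FLOW": "Flow Designer flow",
    "INT": "Integration Hub spoke",
    "KB": "Knowledge Management article",
}


def group_by_type(names: Iterable[str]) -> Dict[str, List[str]]:
    """Group element names by the type implied by their leading prefix."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for name in names:
        prefix = name.split("_", 1)[0]
        groups[ELEMENT_TYPES.get(prefix, "Unrecognised prefix")].append(name)
    return dict(groups)
```

Anything that lands under "Unrecognised prefix" is a candidate for renaming or for a new entry in the mapping.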

The Platform Administrator who implements these conventions saves their successor hundreds of hours. More importantly, they create a platform where troubleshooting takes minutes instead of hours, where impact analysis is straightforward instead of guesswork, where new team members become productive in weeks instead of months.

Building Documentation Standards That Preserve Institutional Knowledge

Configuration changes without documentation are institutional amnesia. Six months from now, when that 'temporary' workaround is causing mysterious failures, who remembers why it was implemented? What business requirement justified the deviation from standard practice? What other systems depend on this behaviour?

Comprehensive documentation captures three critical elements: rationale, dependencies, and rollback procedures.

Rationale explains the business context. Don't just document what changed; document why it changed. When your Platform Owner reviews a business rule that bypasses standard approval routing, the documentation should explain: 'Emergency procurement process for critical infrastructure failures. Approved by Finance Director 15/03/2024. Requires VP approval within 24 hours. Review quarterly to assess continued necessity.'

That context transforms documentation from compliance checkbox to strategic asset. When business requirements evolve, you can assess whether exceptions remain justified. When auditors question deviations from standard process, you have business justification documented. When new Platform Administrators join the team, they understand not just the technical implementation but the business reasoning.

Dependencies map the ripple effects. That business rule modification doesn't exist in isolation: it affects downstream workflows, triggers notifications, updates CMDB records, and feeds Performance Analytics dashboards. Document these connections explicitly:

Upstream triggers: What events activate this configuration?

Downstream impacts: What processes depend on this behaviour?

Integration touch points: What external systems interact with this element?

Data flows: What records does this create, modify, or delete?

Use Knowledge Management to structure this documentation. Create article templates that enforce consistency:

| Documentation Element | Purpose | Example Content |
|---|---|---|
| Configuration Element Name | Unique identifier following naming conventions | RITM_ApprovalRouting_030 |
| Business Justification | Why this change is necessary | Emergency procurement process for critical infrastructure failures requiring expedited approval |
| Technical Implementation | How it works technically | Business rule triggers on RITM state change, bypasses standard routing for items tagged 'emergency', routes to VP approval queue |
| Upstream Dependencies | What triggers this configuration | Service Catalog item selection, Emergency flag set to true, RITM state = 'Pending Approval' |
| Downstream Impacts | What this affects | Approval notification emails, SLA calculations, Performance Analytics approval metrics, Finance audit reports |
| Testing Verification | How to confirm it works | Submit test request REQ0012345 with emergency flag, verify VP receives notification within 5 minutes, confirm approval completes workflow |
| Rollback Procedure | How to undo if needed | Deactivate RITM_ApprovalRouting_030, reactivate RITM_ApprovalRouting_025, clear cache, test with REQ0012345 |
| Review Schedule | When to reassess necessity | Quarterly review with Finance Director, next review 15/06/2025 |
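The template can also be treated as a data structure, which makes "is this article complete?" a question a script can answer. The sketch below is an illustration only: `ConfigDoc` and `missing_sections` are hypothetical names, the fields simply mirror the rows of the table, and how you would load real Knowledge Management articles into it is left open.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class ConfigDoc:
    """One documentation article; each field mirrors a row of the template."""
    element_name: str = ""
    business_justification: str = ""
    technical_implementation: str = ""
    upstream_dependencies: str = ""
    downstream_impacts: str = ""
    testing_verification: str = ""
    rollback_procedure: str = ""
    review_schedule: str = ""


def missing_sections(doc: ConfigDoc) -> List[str]:
    """List the template sections that are empty or contain only whitespace."""
    return [f.name for f in fields(doc) if not getattr(doc, f.name).strip()]
```

An article with only a name and a justification would report six missing sections, including `rollback_procedure`, which is exactly the gap you want to find before deployment day rather than at 2am.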

Rollback procedures are your insurance policy. When a deployment goes wrong at 2am, your exhausted Platform Administrator shouldn't be improvising recovery steps. Document the exact sequence to restore previous functionality:

Deactivate business rule RITM_ApprovalRouting_030

Reactivate business rule RITM_ApprovalRouting_025 (previous version)

Clear Service Portal cache

Verify approval routing through test request REQ0012345

Monitor Performance Analytics dashboard 'Approval Cycle Time' for 24 hours

This level of documentation feels excessive until the moment you desperately need it. High-performing platform teams document obsessively not because they enjoy paperwork, but because they've learned that undocumented changes create technical debt that compounds with interest.

Implementing Environment Segregation and Promotion Discipline

Your Development instance is a playground. Your Production instance is a factory floor. Treating them the same is organisational malpractice.

Environment segregation creates safe spaces for experimentation whilst protecting operational stability. The standard three-tier model (Development, Test, Production) serves different purposes:

Development is where Platform Administrators and developers experiment freely. Break things. Try unconventional approaches. Build prototypes. The Development instance should mirror Production architecture without containing production data or affecting live users. This is where you discover that your elegant workflow design has an edge case that triggers infinite loops.

Test is where you validate that Development experiments actually work. This environment should contain realistic data volumes (sanitised production data or generated test data) and mirror Production configuration. Your Business Analysts conduct user acceptance testing here. Your Integration Specialists verify that API connections behave correctly under load. Your Change Manager reviews deployment packages before approving Production promotion.

Production is sacred. Changes reach Production only after passing Development experimentation and Test validation. No 'quick fixes' applied directly. No 'temporary' workarounds that bypass promotion discipline. No exceptions for urgent requests: if it's truly urgent, you expedite the promotion process; you don't bypass it.

⚠️ COMMON PITFALL The "urgent exception" trap destroys environment discipline faster than any other factor. When executives pressure teams to bypass Test for "just this one critical fix," you're not saving time; you're gambling with platform stability. If it's truly urgent, expedite the promotion process with compressed timelines and additional oversight. Never bypass it entirely.

Enforce this discipline through Flow Designer automation. Build promotion workflows that:

Capture configuration changes in Development through Update Sets

Require Platform Administrator review and documentation completion

Automatically deploy to Test environment

Trigger notification to Business Analyst for UAT

Require Change Manager approval before Production deployment

Execute automated validation tests post-deployment

Roll back automatically if validation fails
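The gating logic in that list can be sketched outside the platform to make the rules explicit before you build them in Flow Designer. This is a simplified model under stated assumptions: `UpdateSet` here is a plain Python record rather than the ServiceNow object, the three boolean flags stand in for the review, UAT and approval steps, and `promote` is a hypothetical function.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    DEVELOPMENT = 1
    TEST = 2
    PRODUCTION = 3


@dataclass
class UpdateSet:
    """Illustrative stand-in for a package of changes moving through environments."""
    name: str
    stage: Stage = Stage.DEVELOPMENT
    documented: bool = False       # administrator review and documentation done
    uat_passed: bool = False       # Business Analyst has signed off UAT
    change_approved: bool = False  # Change Manager has approved


def promote(update_set: UpdateSet) -> Stage:
    """Advance one stage if that stage's gate is satisfied; raise otherwise."""
    if update_set.stage is Stage.DEVELOPMENT:
        if not update_set.documented:
            raise PermissionError("Review and documentation must be complete before Test")
        update_set.stage = Stage.TEST
    elif update_set.stage is Stage.TEST:
        if not (update_set.uat_passed and update_set.change_approved):
            raise PermissionError("UAT and Change Manager approval are required before Production")
        update_set.stage = Stage.PRODUCTION
    else:
        raise ValueError("Update set is already in Production")
    return update_set.stage
```

The point of the design is that there is no code path from Development to Production that skips Test; an expedited change still calls `promote` twice, just faster.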

Use Integration Hub to connect your promotion workflow with external tools. Trigger Slack notifications when deployments reach Test. Create Jira tickets for UAT tracking. Update your CMDB with deployment records. This automation transforms environment management from manual checklist to enforced discipline.

The Platform Owner who implements rigorous environment segregation prevents the catastrophic failures that destroy stakeholder confidence. When your CFO asks why the Finance approval workflow broke, 'We deployed an untested change directly to Production' is a career-limiting explanation. 'Our automated promotion process caught the issue in Test and prevented Production impact' is a credibility-building one.

Establishing Baseline Management and Drift Detection

Configuration drift is the silent killer of platform stability. It doesn't announce itself with error messages. It accumulates gradually: a modified workflow here, an updated business rule there, a 'temporary' integration change that becomes permanent. Each individual change seems harmless. Collectively, they transform your platform from known-good state to unknown-fragile state.

Baseline management captures your platform's known-good configuration at critical moments. After successful deployments, after major upgrades, after significant architectural changes, capture a baseline. This snapshot becomes your reference point for detecting drift.
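Conceptually, a baseline is a fingerprint of each element at a known-good moment, and drift is any difference between that fingerprint and today's. A minimal sketch of the idea, assuming each configuration element has been exported as a dictionary of its settings; the function names and the choice of SHA-256 over canonical JSON are illustrative, not how ServiceNow stores baselines.

```python
import hashlib
import json
from typing import Dict, List


def fingerprint(record: dict) -> str:
    """Hash a record's settings in a key-order-independent way."""
    canonical = json.dumps(record, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def capture_baseline(elements: Dict[str, dict]) -> Dict[str, str]:
    """Snapshot every element as name -> fingerprint."""
    return {name: fingerprint(record) for name, record in elements.items()}


def detect_drift(baseline: Dict[str, str], current_elements: Dict[str, dict]) -> Dict[str, List[str]]:
    """Compare the current state with a baseline and report what changed."""
    current = capture_baseline(current_elements)
    shared = baseline.keys() & current.keys()
    return {
        "added": sorted(current.keys() - baseline.keys()),
        "removed": sorted(baseline.keys() - current.keys()),
        "modified": sorted(n for n in shared if baseline[n] != current[n]),
    }
```

Capturing a baseline after each successful deployment and running `detect_drift` against it later gives you a list of added, removed and modified elements to review.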

ServiceNow's Configuration Management Database (CMDB) provides the foundation. Configure it to track:

Business rules and their execution order

Flow Designer flows and their active/inactive state

Service Catalog items and their availability

Integration Hub connections and their authentication status

Service Portal widgets and their dependencies

Access Control Lists (ACLs) and their permission structures

Use Performance Analytics to monitor baseline adherence. Build dashboards that track:

Configuration elements modified outside approved change windows

Business rules created without corresponding documentation

ACL changes that expand permissions beyond approved roles

Integration connections that bypass standard authentication

Service Portal modifications that affect multiple user groups

Set thresholds that trigger alerts. When configuration drift exceeds acceptable levels (say, more than five undocumented business rule modifications in a week), notify the Platform Owner and Platform Administrator. Don't wait for drift to cause incidents. Detect and remediate proactively.
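The threshold rule itself is simple enough to write down precisely. The sketch below assumes each change is a dictionary with a `documented` flag and a `modified_at` timestamp; those field names, and the functions, are hypothetical. The defaults follow the example in the text: more than five undocumented modifications within seven days.

```python
from datetime import datetime, timedelta
from typing import List


def undocumented_changes_in_window(
    changes: List[dict], now: datetime, window: timedelta = timedelta(days=7)
) -> List[dict]:
    """Return undocumented changes made within the window ending at `now`."""
    cutoff = now - window
    return [c for c in changes if not c["documented"] and c["modified_at"] >= cutoff]


def should_alert(changes: List[dict], now: datetime, threshold: int = 5) -> bool:
    """Alert when undocumented changes in the last week exceed the threshold."""
    return len(undocumented_changes_in_window(changes, now)) > threshold
```

Note that the comparison is strictly greater than, so exactly five undocumented changes does not alert; decide deliberately whether your own threshold is inclusive.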

Implement weekly configuration audits. Your Platform Administrator reviews drift reports, identifies unauthorised changes, and either documents them retroactively (if justified) or reverts them (if not). This discipline prevents drift from accumulating into crisis.

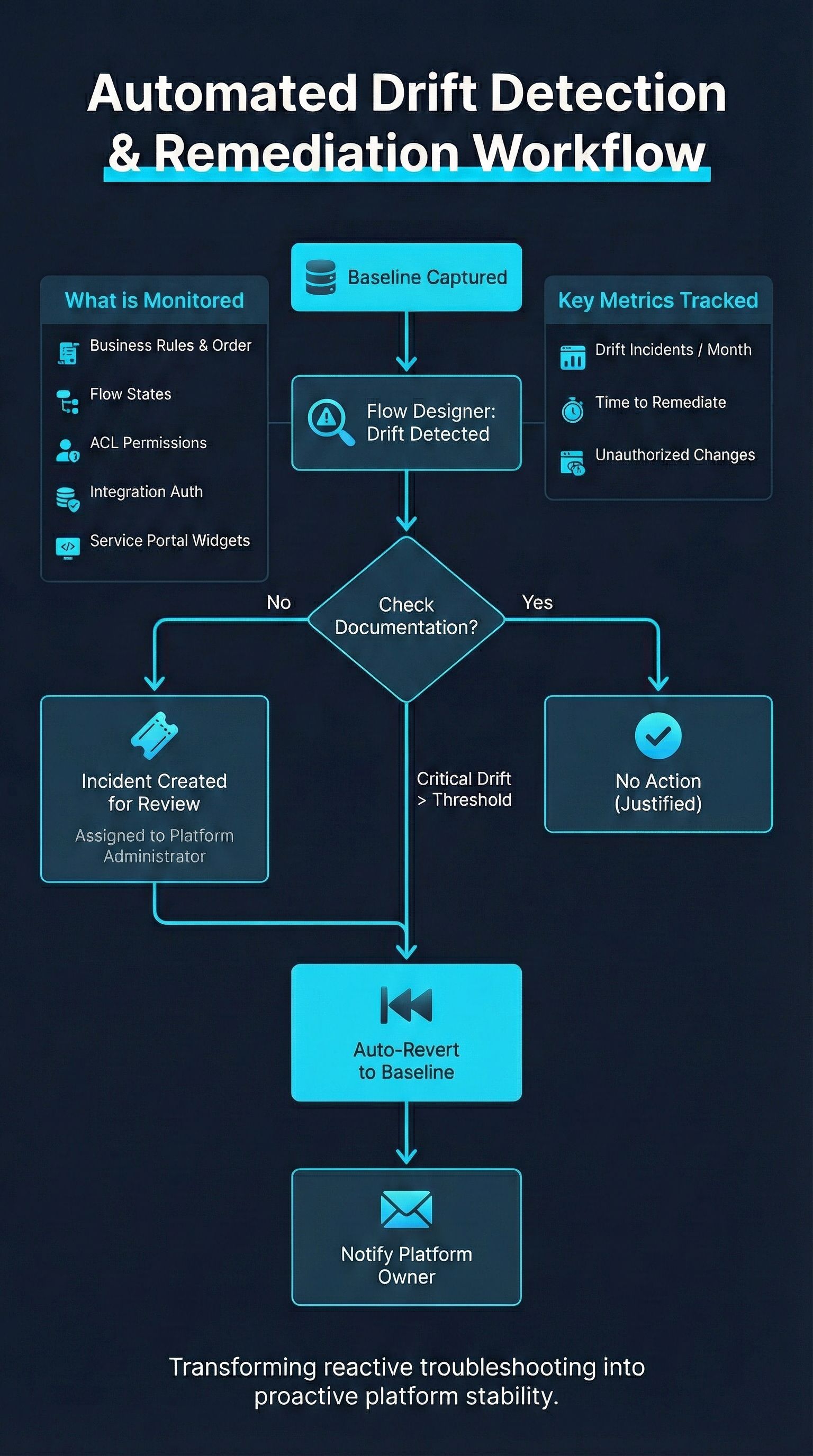

The most sophisticated platform teams automate drift remediation. Use Flow Designer to build self-healing workflows:

Detect configuration deviation from baseline

Assess whether change has approved documentation

If undocumented, create incident assigned to Platform Administrator

If deviation exceeds severity threshold, automatically revert to baseline

Notify Platform Owner of remediation action

Require formal change request to re-implement if change was justified
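The decision at the heart of that workflow can be sketched as a small function. It rests on two assumptions that the steps above leave open: documented deviations are left alone, and severity is an integer scale on which anything above a configurable threshold is reverted. `plan_remediation` and the action strings are illustrative.

```python
from typing import List


def plan_remediation(documented: bool, severity: int, revert_threshold: int = 3) -> List[str]:
    """Return the ordered remediation actions for one detected deviation."""
    if documented:
        # Assumption: a deviation with approved documentation needs no action.
        return []
    actions = ["create incident for Platform Administrator"]
    if severity > revert_threshold:
        actions += [
            "revert to baseline",
            "notify Platform Owner",
            "require change request to re-implement",
        ]
    return actions
```

Keeping the decision separate from the actions makes it easy to review the policy with your Change Manager before any automatic revert is switched on.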

This automation transforms baseline management from periodic audit to continuous enforcement. Your platform maintains stability not through heroic troubleshooting efforts, but through systematic prevention of the conditions that cause instability.

A deep dive into the automated workflow for detecting and remediating configuration drift, transforming reactive troubleshooting into proactive platform stability.

Integrating Configuration Management with Change Governance

Configuration management and change governance aren't separate disciplines; they're two sides of the same coin. Your Change Management process should enforce configuration standards, and your configuration standards should feed change approval decisions.

Build this integration through Change Management module configuration. Require that every change request includes:

List of configuration elements affected (auto-populated from Update Sets)

Documentation links from Knowledge Management

Baseline comparison showing before/after state

Dependency map showing upstream and downstream impacts

Rollback procedure with specific steps

Validation criteria for post-deployment testing

Use Flow Designer to automate change impact analysis. When a Change Manager receives an approval request, trigger workflows that:

Query CMDB for dependencies of affected configuration elements

Identify other active changes that might conflict

Check Performance Analytics for recent incidents related to affected components

Generate risk score based on scope, dependencies, and recent stability

Recommend approval, additional review, or rejection based on risk assessment
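To make the scoring step concrete, here is one possible shape for it. Everything numeric in this sketch is an assumption chosen for illustration: the weights, the cap of 100 and the cut-offs of 30 and 60 are not ServiceNow defaults, and `risk_score` and `recommend` are hypothetical names. Calibrate them against your own incident history.

```python
def risk_score(
    elements_affected: int,
    dependency_count: int,
    recent_incidents: int,
    conflicting_changes: int,
) -> int:
    """Combine scope, dependencies and recent stability into a 0-100 score."""
    raw = (
        2 * elements_affected       # scope of the change
        + 3 * dependency_count      # upstream and downstream dependencies
        + 10 * recent_incidents     # recent instability in affected components
        + 15 * conflicting_changes  # other active changes touching the same area
    )
    return min(100, raw)


def recommend(score: int) -> str:
    """Map a score to a recommendation; the Change Manager still decides."""
    if score < 30:
        return "approve"
    if score < 60:
        return "additional review"
    return "reject"
```

With these illustrative weights, a change touching two elements with three dependencies and no recent incidents scores 13 and is recommended for approval, whilst conflicting changes and recent incidents quickly push a request into review.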

This automation doesn't replace Change Manager judgement; it augments it. The Change Manager still makes the final decision, but they make it with comprehensive impact analysis that would take hours to compile manually.

Track configuration management metrics through Performance Analytics:

Percentage of changes with complete documentation

Average time from change approval to deployment

Deployment success rate by environment

Configuration drift incidents per month

Time to detect and remediate unauthorised changes
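Two of these metrics are plain ratios, and it helps to agree on their definitions before building the dashboard. A short sketch, assuming change and deployment records exported as dictionaries; the field names `documented`, `environment` and `succeeded` are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List


def documentation_rate(changes: List[dict]) -> float:
    """Percentage of changes with complete documentation (0.0 if there are none)."""
    if not changes:
        return 0.0
    return 100.0 * sum(1 for c in changes if c["documented"]) / len(changes)


def success_rate_by_environment(deployments: List[dict]) -> Dict[str, float]:
    """Percentage of successful deployments for each environment."""
    counts = defaultdict(lambda: [0, 0])  # environment -> [successes, total]
    for d in deployments:
        counts[d["environment"]][1] += 1
        if d["succeeded"]:
            counts[d["environment"]][0] += 1
    return {env: 100.0 * ok / total for env, (ok, total) in counts.items()}
```

Agreeing up front on what counts as "documented" and "succeeded" matters more than the arithmetic; the functions only make that definition explicit.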

Review these metrics quarterly with your Platform Owner. Identify trends. Celebrate improvements. Address persistent gaps. Use data to demonstrate the business value of configuration discipline.

Scaling Configuration Management as Your Platform Grows

Configuration management standards that work for a 50-user pilot collapse under the weight of enterprise-scale deployment. Plan for scale from the beginning. As your platform expands, consider:

Federated administration: When your platform serves multiple business units, each with dedicated Platform Administrators, establish clear boundaries. HR applications follow HR naming conventions. Finance workflows follow Finance documentation standards. IT services follow IT baseline requirements. But all follow the overarching framework that enables cross-functional integration.

Automated compliance checking: Manual configuration audits don't scale to platforms with thousands of business rules and hundreds of integrations. Invest in automated scanning tools that validate naming convention compliance, documentation completeness, and baseline adherence. Use Flow Designer to build custom compliance checks specific to your organisation's standards.

Configuration as code: For complex configurations, treat ServiceNow like software development. Store configuration in version control systems. Use CI/CD pipelines to automate deployment. Implement code review processes for significant changes. This approach scales configuration management to enterprise complexity whilst maintaining the discipline that prevents drift.

Specialised roles: As your platform team grows, assign specific configuration management responsibilities. Designate a CMDB Manager who owns baseline management. Assign Integration Specialists who enforce connection standards. Empower Process Owners who validate that configuration changes align with business process requirements.

The Platform Owner who plans for scale avoids the painful re-platforming that occurs when configuration chaos becomes unsustainable. Better to implement scalable standards when you have 100 configuration elements than to retrofit them when you have 10,000.

The Discipline That Separates Mature Platforms from Fragile Ones

Configuration management isn't glamorous. It's the discipline that nobody notices when it works and everyone blames when it fails. It's the difference between platforms that scale gracefully and platforms that collapse under their own complexity.

You've seen the alternative: the 2am emergency calls when deployments fail mysteriously. The hours spent hunting through undocumented business rules. The stakeholder confidence eroded by preventable incidents. The technical debt that compounds until re-platforming seems easier than remediation.

Scalable configuration management transforms that chaos into clarity. Consistent naming conventions that make troubleshooting straightforward. Comprehensive documentation that preserves institutional knowledge. Environment segregation that protects Production stability. Baseline management that detects drift before it causes incidents. Change governance that enforces discipline without stifling innovation.

This is the infrastructure that allows your ServiceNow platform to become the strategic asset your organisation needs. Not just technically capable, but operationally resilient. Not just functionally rich, but strategically reliable.

But this article provides only the foundation. The real transformation happens when you implement configuration management frameworks tailored to your organisation's specific complexity: naming convention templates that reflect your business structure, documentation standards that capture your unique requirements, promotion workflows that integrate with your existing tools, and baseline monitoring that aligns with your risk tolerance.

That's where The Platform Operating Manual comes in. Our detailed implementation guides show you exactly how to build configuration management discipline that scales, complete with naming convention templates, documentation article structures, Flow Designer automation blueprints, Performance Analytics dashboard configurations, and change governance integration frameworks. We'll show you how to gain buy-in from resistant Platform Administrators who view documentation as bureaucracy, how to balance standardisation with the flexibility that innovation requires, and how to evolve your configuration management practices as your platform matures.

Don't let configuration chaos undermine your platform's potential. Check back in with The Platform Operating Manual soon and transform fragility into resilience.

Did you know?

The Apollo Guidance Computer that navigated astronauts to the Moon in 1969 contained approximately 145,000 lines of code, remarkably modest by modern standards. Yet NASA's configuration management discipline was extraordinary. Every single line of code was documented with meticulous detail: who wrote it, why it was necessary, what it affected, and how to verify its correctness. Engineers used a 'core rope memory' system where software was literally woven into hardware by hand, making errors catastrophically expensive to fix.

Margaret Hamilton, who led the software engineering team, implemented rigorous testing protocols that would seem excessive even today. Every code change underwent multiple levels of review. Simulations tested edge cases that seemed impossibly unlikely. Documentation captured not just what the code did, but the reasoning behind every decision. When Apollo 11's lunar module computer triggered alarms during descent, a scenario the team had anticipated and documented, Neil Armstrong and Buzz Aldrin knew exactly how to respond because the configuration management discipline had prepared them for contingencies.

The lesson for ServiceNow platforms? When the stakes are high enough, organisations find the discipline to implement configuration management rigorously. Your platform might not be landing astronauts on the Moon, but it's running business processes that your organisation depends on. The question isn't whether configuration management discipline is worth the effort; it's whether you'll implement it proactively or learn its value through painful failures.